Hello,

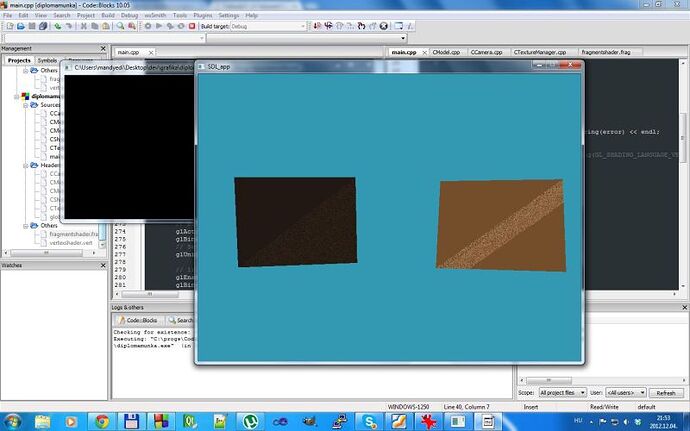

This problem may not be directly related to shaders, but I don’t know exactly where it is. Since I moved from the fixed-function pipeline to shaders, the texture appears wrong, as the screenshot above shows.

(The model has two planes, which appear correct; only the texture is wrong.)

Some information about my code: I wrote an exporter for Blender that creates a binary model file, and a loader for it. The model file can hold multiple objects (meshes) in one model, and each object has a different texture. The first version used VBOs without shaders and worked well. After studying how to use shaders, I changed my code based on this tutorial: http://www.opengl-tutorial.org/beginners-tutorials/tutorial-5-a-textured-cube/

I think the main problem is that I don’t understand the concept of drawing a complex model with multiple objects and textures. Most articles and tutorials show how to draw only one object.

Here is my drawing function. I call this in the main loop after the keyboard handling and camera update.

    void CModel::draw(glm::mat4 &MVP)
    {
        glActiveTexture(GL_TEXTURE0);

        for(unsigned int i=0; i<m_numObjects; i++)
        {
            glUseProgram(m_programID);

            unsigned int matrixUniform = glGetUniformLocation(m_programID, "MVP");
            unsigned int textureUniform = glGetUniformLocation(m_programID, "myTextureSampler");

            glUniformMatrix4fv(matrixUniform, 1, GL_FALSE, &MVP[0][0]);

            glBindTexture(GL_TEXTURE_2D, m_textureIDs[i]);
            glUniform1i(textureUniform, 0);

            glBindBuffer(GL_ARRAY_BUFFER, m_bufferIDs[i]);

            // vertices
            glEnableVertexAttribArray(0);
            glVertexAttribPointer(
                0,               // must match the layout in the shader.
                3,               // size
                GL_FLOAT,        // type
                GL_FALSE,        // normalized?
                sizeof(float)*8, // stride
                (void*)0         // array buffer offset
            );

            // UVs
            glEnableVertexAttribArray(1);
            glVertexAttribPointer(
                1,
                2,
                GL_FLOAT,
                GL_FALSE,
                sizeof(float)*8,
                (void*)6
            );

            glDrawArrays(GL_TRIANGLES, 0, m_numElements[i]);

            glDisableVertexAttribArray(0);
            glDisableVertexAttribArray(1);

            glUseProgram(0);
        }

        //glDisable(GL_TEXTURE_2D);
    }

The data array sent to the buffer has this format: [vertex.x, vertex.y, vertex.z, normal.x, normal.y, normal.z, textureCoord.u, textureCoord.v, …]
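To make the layout above concrete, here is a minimal sketch of how the stride and the per-attribute offsets work out in bytes. The `Vertex` struct and the `k...` constant names are hypothetical (they are not part of my loader), and the sketch assumes the buffer is tightly packed floats with no padding. It matters because the last argument of `glVertexAttribPointer` is a byte offset into the bound buffer, not a count of floats.

```cpp
#include <cstddef>  // offsetof, std::size_t

// Hypothetical struct mirroring the interleaved layout described above:
// position (3 floats), normal (3 floats), texture coordinate (2 floats).
struct Vertex {
    float position[3];
    float normal[3];
    float uv[2];
};

// Stride: distance in bytes between the starts of two consecutive vertices.
constexpr std::size_t kStride = sizeof(Vertex);

// Byte offset of each attribute from the start of a vertex.
constexpr std::size_t kPositionOffset = offsetof(Vertex, position);
constexpr std::size_t kNormalOffset   = offsetof(Vertex, normal);
constexpr std::size_t kUvOffset       = offsetof(Vertex, uv);

// Sanity checks for the assumed tightly packed layout.
static_assert(kStride == 8 * sizeof(float), "no padding expected");
static_assert(kUvOffset == 6 * sizeof(float), "UVs start after six floats");
```

Under these assumptions the UV pointer argument would be written as `reinterpret_cast<const void*>(kUvOffset)`, which is 24 on platforms where `float` is 4 bytes. That differs from the `(void*)6` in the draw function above, so it may be worth checking.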

The vertex and fragment shaders worked in the first version. Here are the shaders, but I don’t think they need to be changed in any way.

Vertex shader:

    #version 330 core

    // Input vertex data, different for all executions of this shader.
    layout(location = 0) in vec3 vertexPosition_modelspace;
    layout(location = 1) in vec2 vertexUV;

    // Output data ; will be interpolated for each fragment.
    out vec2 UV;

    // Values that stay constant for the whole mesh.
    uniform mat4 MVP;

    void main(){
        // Output position of the vertex, in clip space : MVP * position
        gl_Position = MVP * vec4(vertexPosition_modelspace,1);

        // UV of the vertex. No special space for this one.
        UV = vertexUV;
    }

Fragment shader:

    #version 330 core

    // Interpolated values from the vertex shaders
    in vec2 UV;

    // Output data
    out vec3 color;

    // Values that stay constant for the whole mesh.
    uniform sampler2D myTextureSampler;

    void main(){
        // Output color = color of the texture at the specified UV
        color = texture2D( myTextureSampler, UV ).rgb;
    }

Help: we covered simple code with our instructor, but he gave us a complex one to comment and to describe what each function does.
