Sigh…

My app is now coded to save the contents of a 3D view, or a selected portion of it, to a disk file.

I generate a polygon mesh into a display list using triangle strips, then render it with a perspective projection, with GL_LIGHTING, GL_DEPTH_TEST and GL_COLOR_MATERIAL enabled and the shade model set to GL_SMOOTH.

If FBOs are supported at runtime, the user is given an option to create an image that’s bigger than the view on the screen (and even bigger than the entire screen).

If the user asks to save an image bigger than the current view, I create an FBO and render to that.

I then copy the contents of either the front color buffer or the FBO into an image in main memory using glReadPixels.

All this works beautifully, except that my images are strangely and subtly distorted if the output goes through an FBO.

I added a “force FBOs” flag for testing so I could generate exactly the same image directly from the front color buffer or through an FBO.

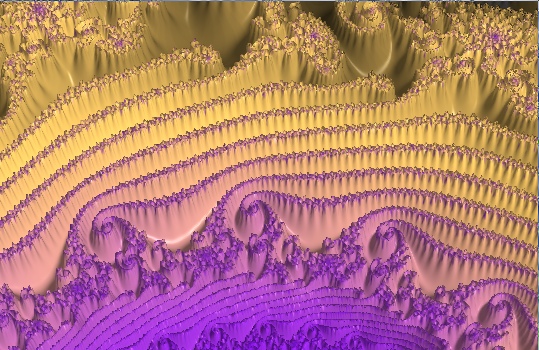

Here is how the image SHOULD look: (created without FBOs, by doing a glReadPixels directly from the front buffer)

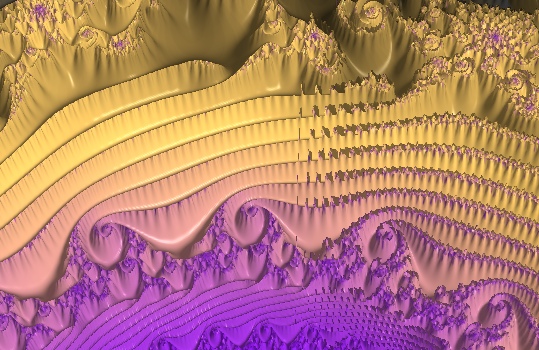

And here’s how it looks when the output is rendered to an FBO:

Notice how the ridges of my 3D object (a fractal) are fairly uniformly bumpy from left to right across the middle of the first image.

In the image rendered to an FBO, the ridges start out looking too smooth on the left side of the image, and have odd artifacts on the right side.

By the time I’m ready to save my image to disk, I’ve just rendered it to the back color buffer and then copied it to the front color buffer. So if I’m doing glReadPixels from my regular color buffer, I don’t have to do anything more.

If I’m re-rendering to an FBO, I generate the FBO, bind it, generate a renderbuffer, bind that, allocate storage in the renderbuffer, and then just do a glClear and render my mesh object. I don’t set up my projection, specify my lighting or shading options, or any of that, because it is already set up.

Do I need to specify all my options again before drawing to the FBO?

Here’s the code I use to set up my FBO for drawing:

    //Set up a FBO with one renderbuffer attachment
    glGenFramebuffersEXT(1, &framebuffer); //Generate a new framebuffer id
    glBindFramebufferEXT(GL_FRAMEBUFFER_EXT, framebuffer); //Bind to it

    glGenRenderbuffersEXT(1, &renderbuffer); //Generate a new renderbuffer id
    glBindRenderbufferEXT(GL_RENDERBUFFER_EXT, renderbuffer); //Bind the renderbuffer
    glRenderbufferStorageEXT(GL_RENDERBUFFER_EXT,
                             GL_RGB,
                             save_width,
                             save_height); //Create storage for the renderbuffer object
    glFramebufferRenderbufferEXT(GL_FRAMEBUFFER_EXT,
                                 GL_COLOR_ATTACHMENT0_EXT,
                                 GL_RENDERBUFFER_EXT,
                                 renderbuffer); //Install the renderbuffer in the framebuffer?

    status = glCheckFramebufferStatusEXT(GL_FRAMEBUFFER_EXT); //Make sure the framebuffer is "complete" (ready for drawing)
    if (status != GL_FRAMEBUFFER_COMPLETE_EXT)
    {   //Error. clean up before returning
        //unbind the frame buffer.
        glBindFramebufferEXT(GL_FRAMEBUFFER_EXT, 0);
        glDeleteRenderbuffersEXT(1, &renderbuffer);
        NSLog(@"Error. Frame buffer not complete.");
        return nil;
    }

    if (!save_selection)
        glViewport (0, 0, openGLRect.size.width, openGLRect.size.height);
    else
    {   // shift and scale our drawing
        scale_factor = ((float)save_width) / view_selection_rect.size.width;
        glViewport (-view_selection_rect.origin.x * scale_factor,
                    -view_selection_rect.origin.y * scale_factor,
                    camera.viewWidth * scale_factor,
                    camera.viewHeight * scale_factor );
    }

    glClear (GL_COLOR_BUFFER_BIT | GL_DEPTH_BUFFER_BIT);
    [theMeshObject drawMesh];

    glPixelStorei(GL_PACK_ALIGNMENT, 8);
    glReadPixels( openGLRect.origin.x,
                  openGLRect.origin.y,
                  openGLRect.size.width,
                  openGLRect.size.height,
                  GL_RGB,
                  GL_UNSIGNED_BYTE,
                  theRepData);
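
On the read-back step: with GL_PACK_ALIGNMENT set to 8, each row that glReadPixels writes starts on an 8-byte boundary, so rows are padded and the destination buffer has to be sized by the padded row stride rather than by width × 3. Here is a minimal sketch of that arithmetic in plain C, assuming GL_UNSIGNED_BYTE components and GL_PACK_ROW_LENGTH left at 0. The function names are hypothetical and are not part of my app or of OpenGL.

```c
#include <assert.h>
#include <stddef.h>

/* Bytes from the start of one row to the start of the next, as written by
   glReadPixels for GL_UNSIGNED_BYTE data when GL_PACK_ALIGNMENT is
   `alignment` and GL_PACK_ROW_LENGTH is 0.
   `components` is 3 for GL_RGB and 4 for GL_RGBA. */
size_t packed_row_stride(size_t width, size_t components, size_t alignment)
{
    /* OpenGL only accepts these four alignment values. */
    assert(alignment == 1 || alignment == 2 || alignment == 4 || alignment == 8);

    size_t unpadded = width * components;
    /* Round up to the next multiple of the alignment. */
    return (unpadded + alignment - 1) / alignment * alignment;
}

/* A buffer size that is large enough to hold `height` padded rows. */
size_t packed_image_size(size_t width, size_t height,
                         size_t components, size_t alignment)
{
    return packed_row_stride(width, components, alignment) * height;
}
```

For example, a 100-pixel-wide GL_RGB row is 300 bytes unpadded but takes 304 bytes with an alignment of 8, so whatever reads theRepData afterwards has to step through it with the same stride.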

Note that the code that scales the viewport isn’t changing the scale at all in my simple test. I can get the odd distortion even if I render the whole viewport without any scaling.
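
To make the shift-and-scale step easier to check in isolation, here is a minimal sketch of the same viewport arithmetic as a standalone C function. The `Rect` and `Viewport` structs and the function name are hypothetical stand-ins for my NSRect and camera fields, and the values are kept as floats even though glViewport itself takes integers.

```c
#include <assert.h>

/* Hypothetical stand-in for the selection rectangle (NSRect in my app). */
typedef struct { float x, y, width, height; } Rect;

/* The four values that would be passed on to glViewport. */
typedef struct { float x, y, width, height; } Viewport;

/* Scale the whole view so that `selection` becomes `save_width` pixels wide,
   and shift it so that the selection's origin lands at (0, 0) of the output. */
Viewport selection_viewport(Rect selection, float view_width,
                            float view_height, float save_width)
{
    assert(selection.width > 0.0f);   /* avoid dividing by zero */

    float scale = save_width / selection.width;

    Viewport vp;
    vp.x = -selection.x * scale;
    vp.y = -selection.y * scale;
    vp.width = view_width * scale;
    vp.height = view_height * scale;
    return vp;
}
```

With a selection that covers the whole 800 × 600 view and a save width of 800, the scale factor is 1 and the result is simply (0, 0, 800, 600), which matches the unscaled case in my simple test.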